According to the CMI Content and Marketing Trends survey, 95% of B2B marketers now use AI-powered tools in their content workflows. That number makes it sound like the industry has figured out where AI fits. Yet only 39% of those teams report that AI has actually improved their content performance.

So what we have now is early, near-universal adoption with not-so-great results. That disconnect exists because most marketing teams have not built the piece that makes the investment pay off.

More Output, Same Problems

When companies add AI to their content process, they tend to get more of what they already had. If the brand voice was inconsistent before, AI just produces inconsistent content faster. If the editorial standards were informal (or missing altogether), AI fills the vacuum with its own defaults, and those sound like everyone else's.

Yes, the speed of content delivery improved. But the quality did not, because nobody defined what quality meant in the first place.

The way most teams are spending in 2026 tells you this is not getting fixed anytime soon.

The Infrastructure Nobody (Wants to) Budget For

In the same survey, when B2B marketers were asked where they planned to increase spending in 2026, 45% said AI-powered marketing tools. Only 9% said training and capability building. Companies are buying faster tools while very few are funding the training to use them properly.

That missing investment is editorial standards.

Standards start with documented voice and tone guidelines specific enough that a new writer or an AI tool can follow them without guessing. They include mechanical style rules that prevent the small inconsistencies that erode credibility over time, and a defined review process with clear checkpoints, so drafts are evaluated against the same criteria every time. And they require something AI cannot replace on its own: human editorial judgment applied at every stage.

We have written before about the credibility gap that opens when AI-generated content floods a channel without editorial oversight. This is the operational side of that same problem. Without a system that defines what your brand sounds like, and a person who enforces it, every piece of content your team produces is a coin flip, and it can end up sounding exactly like everything else published online.

Your Readers Can Tell

Readers are getting sharper at recognizing content that lacks a distinct point of view, and they are responding with their attention.

A 2025 study from the Nuremberg Institute for Market Decisions tested this directly. Researchers showed 3,000 consumers across the US, UK, and Germany identical ad content, labeling half as AI-generated and half as human-made. The content labeled as AI-generated was rated as less natural, less useful, and less engaging, and consumers were less willing to research or purchase the product.

The content was exactly the same, but the perception changed.

The risk is not that your audience will run your blog post through a detection tool. It’s actually subtler than that. When content feels generic, when it reads like it could have been published by any company in your category, readers disengage. They scroll right on past, and once a reader mentally files your brand under "same as everyone else," that is a category you do not recover from easily.

A distinctive voice protects your audience and your revenue, and you cannot maintain it without standards.

The Boring Stuff That Makes Everything Else Work

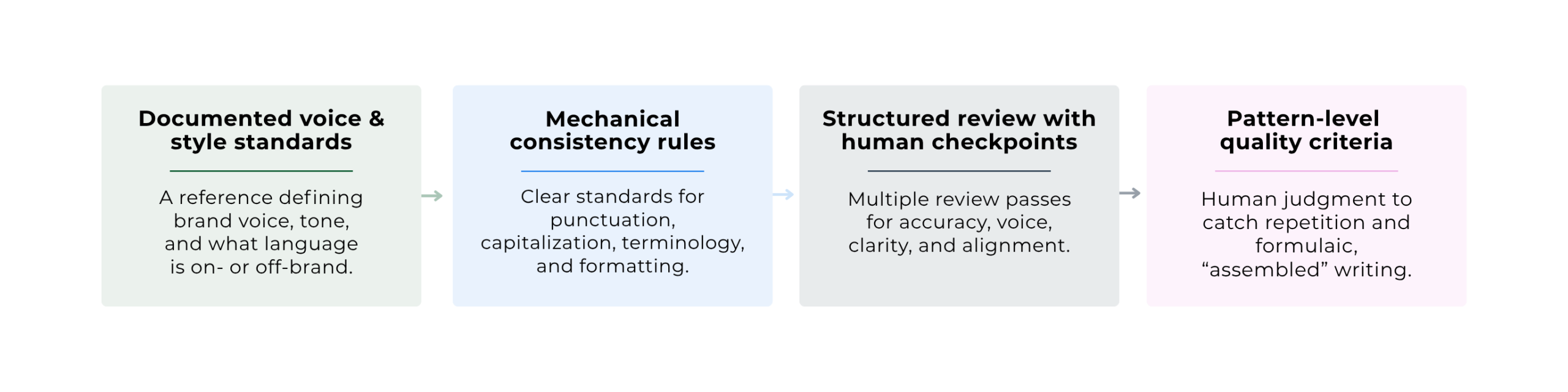

A tone-of-voice PDF that no one has opened since it was created does not count. What counts is a working system, run by people with editorial judgment, that shapes content before it reaches your audience. That system needs four things:

1. Documented voice and style standards

This is a comprehensive reference document rather than a simple paragraph about tone. It specifies how the brand sounds across different content types and channels, what language is on-brand and what is off-limits, and where the boundaries sit. It has to be detailed enough that two different writers working from the same guide can produce content that reads like it came from the same company.

2. Mechanical consistency rules

This includes punctuation conventions, capitalization standards, approved terminology, and formatting preferences. In isolation, each of these feels basic and none feels urgent. Across dozens of pieces of content, they are the difference between a brand that reads as polished and one that reads as careless. These are also the easiest standards for AI to follow, which makes them the most logical starting point for any team adopting AI-assisted workflows.

3. A structured review process with human checkpoints

Too many teams rely on a single editorial pass where the reviewer catches whatever they happen to catch. A better approach breaks the review into stages: accuracy, then brand and voice compliance, then clarity, then strategic alignment. Each stage has different criteria, and that separation is what keeps things from getting missed. One editor can run all four passes, but only if the process is defined well enough that they know what they are looking for at each one.

4. Pattern-level quality criteria, enforced by people who know what to look for

This is where most teams have nothing at all. Content with perfect grammar and punctuation can still fall into patterns that make it feel templated, even when the information is solid. The same sentence structures show up again and again, and the same rhythms repeat. The result reads like it was assembled, not written. No style guide covers how to deal with this, and most editorial processes do not account for it. Identifying these patterns requires the kind of editorial instinct that comes from reading widely and editing critically. It is inherently human work, and it is the single biggest quality gap in AI-assisted content today.

If AI is part of your content marketing plan this year, this is the part to build first, and it is the part most teams skip.

Why This Gets Better Over Time

Editorial standards get more valuable the longer they are in place, but not in the way most people expect.

The obvious payoff is operational. Voice guidelines mean fewer rewrites, and a structured review process means fewer rounds before sign-off. Pattern-level criteria mean your editor stops catching the same problems over and over because the writers stop making them.

The less obvious payoff is what happens to the people running the system. An editor who has been working within a well-defined set of standards for six months has a feel for the brand that no onboarding document can replicate. They know which phrases land and which ones drift. They can read a draft and tell you in two minutes whether it sounds right. That instinct gets sharper with every piece they touch, and it is the thing that disappears first when companies start treating editorial work as overhead to reduce.

Without this kind of infrastructure, adding AI to your content operation is like hiring ten new writers but handing none of them a style guide. The output goes up while the quality stays completely flat. And the time your team spends fixing drafts quietly eats the efficiency gains you thought AI would deliver.

The marketing trends shaping 2026 keep reinforcing the same point: buyers trust brands that sound like themselves. Whether your team has the editorial infrastructure to deliver on that, or whether you are just producing more of what was not working before, is worth an honest conversation.

If you are ready to talk about it, we would love to hear from you.

FAQs

Why isn't AI improving my content quality?

Because AI writes based on whatever inputs you give it. If your voice guidelines are vague, your style rules are informal, and your review process is "whoever has time takes a look," AI just reproduces those problems at higher volume. The tool is not the issue. The system around it is.

How do I maintain brand voice when using AI writing tools?

Document the voice in enough detail that someone unfamiliar with the brand could follow it. That means specifics: what language is on-brand, what is off-limits, how the tone shifts by channel. Then make sure every AI-assisted draft goes through a human review that checks voice as its own step, not lumped in with grammar and spelling. AI follows mechanical rules well. It cannot tell you whether something sounds exactly like your company.

What are editorial standards for content marketing?

They are the documented rules and processes that define how content gets written, reviewed, and approved before it goes out. At a minimum, that includes voice and style guidelines, mechanical rules (punctuation, terminology, formatting), a structured review process with defined stages, and pattern-level quality criteria that catch the subtler problems like repetitive structure and formulaic rhythm. Without them, quality depends entirely on whoever happens to be reviewing the draft that day.

What should a content review process include?

Break it into stages instead of relying on one pass. First, check mechanical accuracy. Second, check brand and voice compliance. Third, evaluate clarity and structure. Fourth, ask whether the piece actually serves its purpose and says something worth publishing. One editor can handle all four, but only if each stage is defined clearly enough that they know exactly what they are evaluating at each one.